A few months back a well-known sports league approached us, seeking upgraded Portal features...

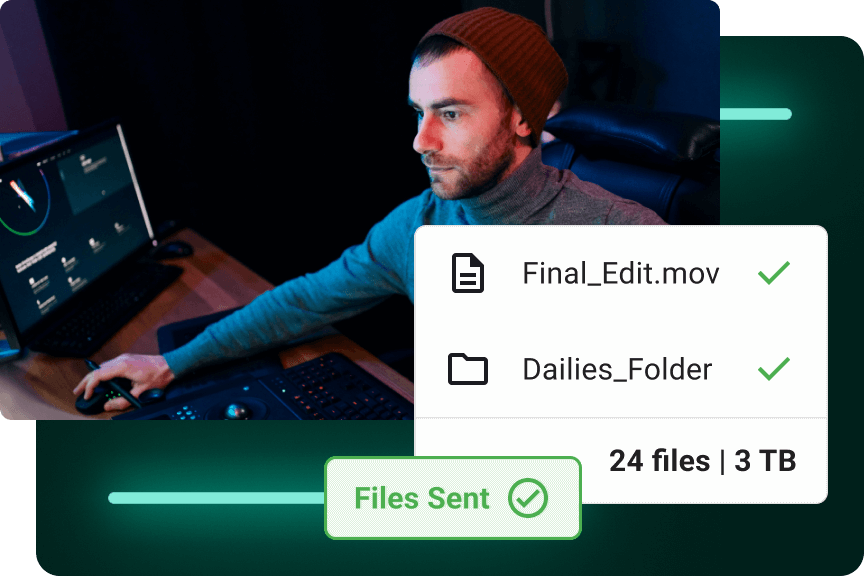

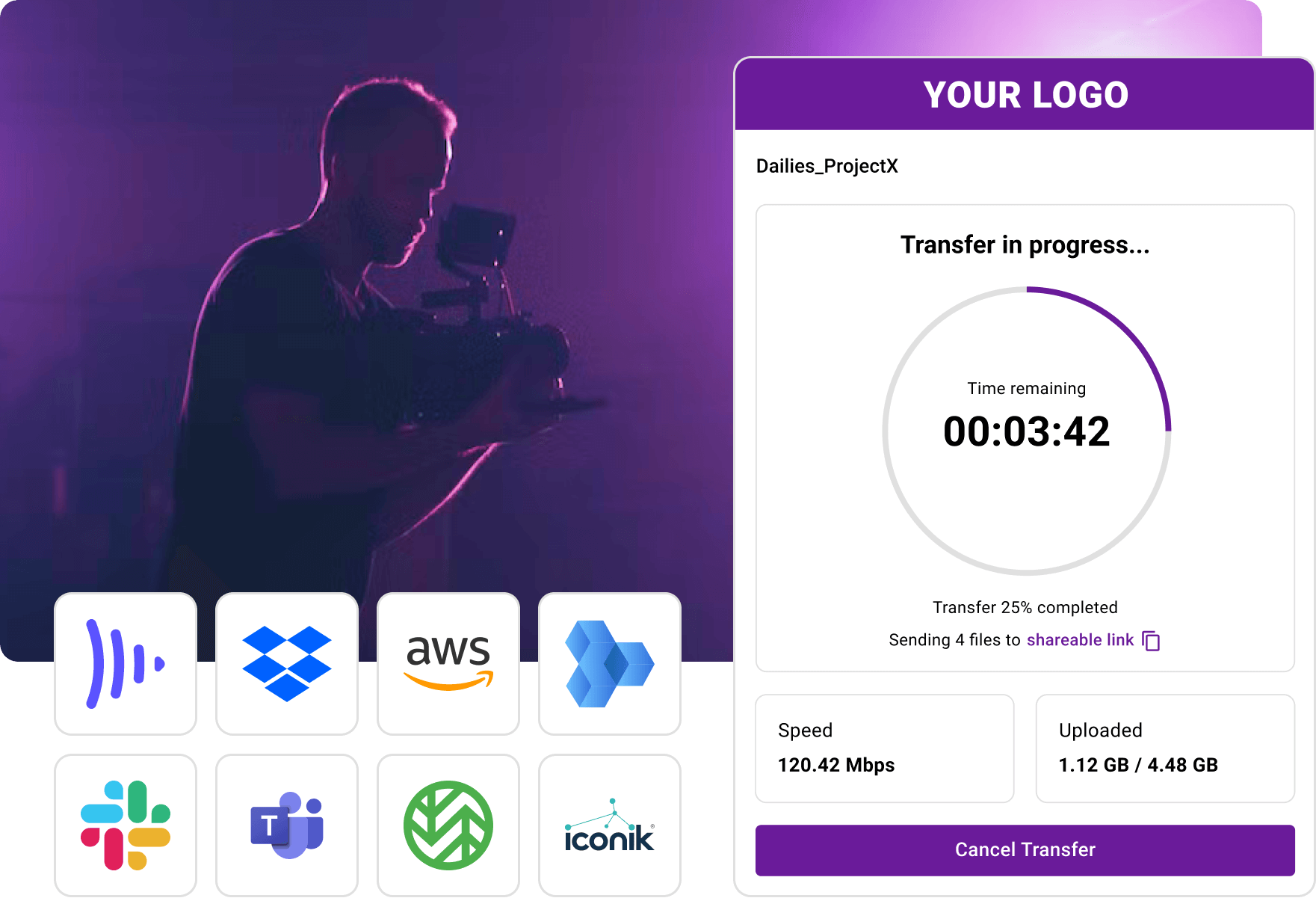

The Fastest File Transfer for Media

MASV is large file transfer, simplified. Accelerate your video workflow with seamless, high-performance file sharing.

Try MASV for free today.

Always Reliable

High-speed transfers with fault protection.

No Learning Curve

Use any browser for easy onboarding and collaboration.

Certified Secure

TPN & ISO compliant with must-have security controls.

Flexible Pricing

Cost savings in the short- and long-term vs Signiant/Aspera.

Say Goodbye to Bottlenecks

Drastically reduce turnaround times with MASV, the leading large file transfer service that ensures lightning-fast uploads and downloads, regardless of file size.

Close the Distance

Bypass slow public internet routes and accelerate transfers with global AWS infrastructure.

Optimize Bandwidth

Control bandwidth usage; maximize for speed or set limits for shared networks.

Ensure Data Integrity

Ensure your files arrive intact with integrity checks & auto-retry of stalled transfers.

Why Our Customers Love Us

MASV is trusted by 9,000+ professionals to streamline their media workflows.

“MASV is file transfer without all the stuff that makes file transfer painful.”

Alan Saunders, Dir. of Post-Production, Curiosity Stream

“I recommend MASV to everybody. It’s a necessity to survive in this business.”

Sheila Lynch, Operations Manager, Hell’s Color Kitchen

“MASV does exactly what it’s supposed to do. It has solved all my file transfer problems.”

Steve Giammaria, Senior Sound Editor, Sound Lounge

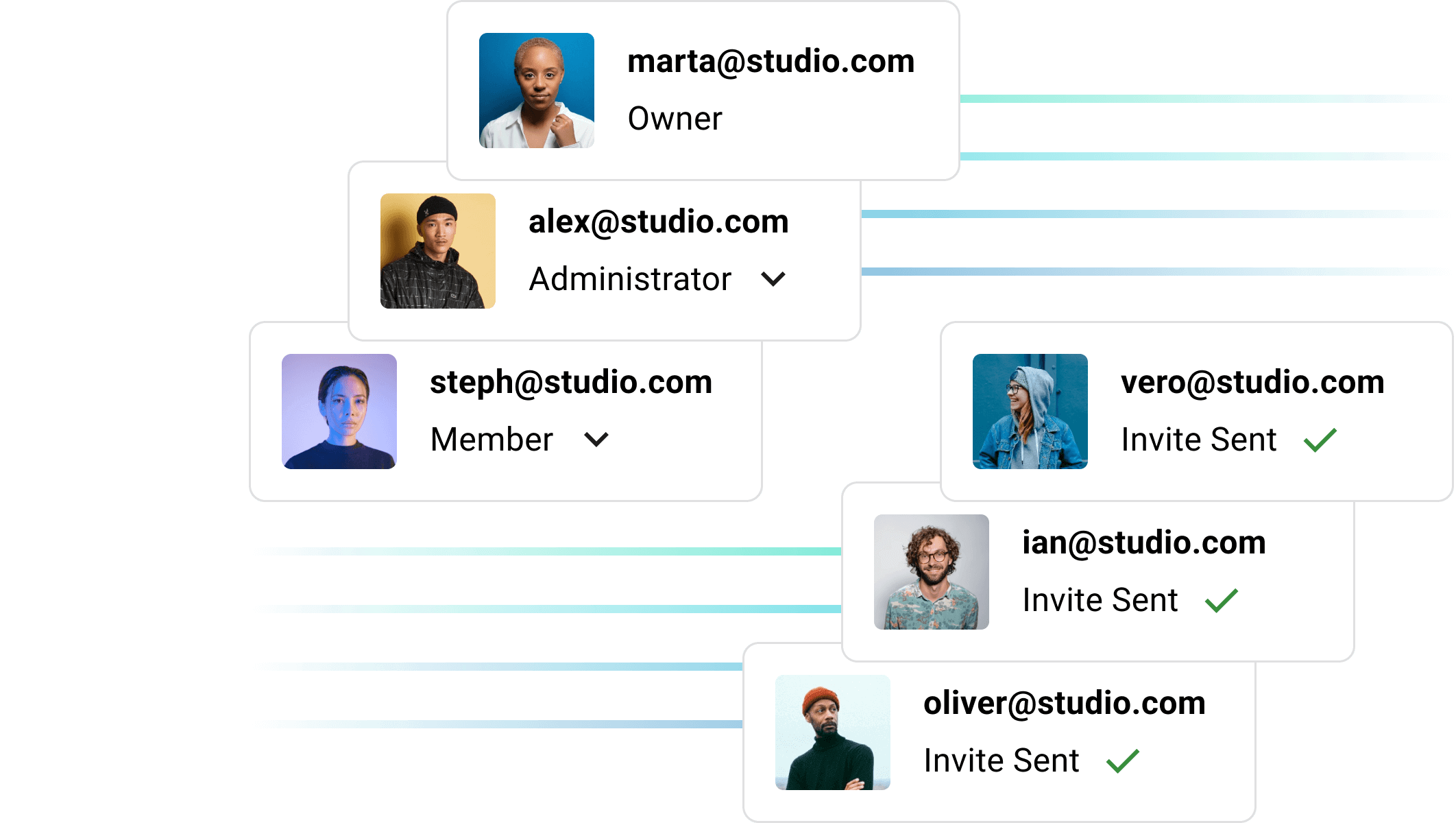

Unleash Your Team’s Potential

Give your team more time for big picture thinking. Start MASV for free.

Pay Per Use Not Per Seat

We charge based on data downloaded, with 10 days of free storage, unlimited user invites, and great savings on prepaid data purchases.

| Rate | Description |
| --- | --- |
| $0.25 | Our standard Pay-As-You-Go rate. |
| $0.13 | Save up to 50% with prepaid credits. |
| $0.05 | Big savings on larger volumes (inquire for details). |

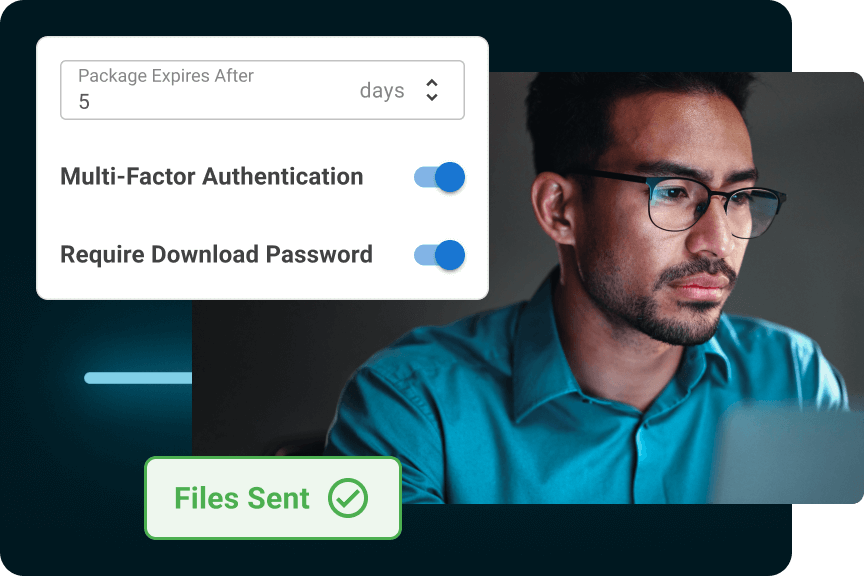

Fortified Cloud Protection

Our secure file transfer platform ensures regulatory compliance, offers robust privacy features, and is built on an encrypted AWS cloud.

Time-Saving Features

Share files faster than ever before with our suite of productivity tools and integrations that customers can’t get enough of.

Easy File Collection

Let clients/contractors upload assets to you without a login.

Our Latest Announcements

Introducing Upload Rules to Standardize Content Collection With File Delivery Specifications

MASV Launches Worldwide Solutions Partner Program

New MASV Partner Program helps Solution Providers Build Faster, More Secure, and Easier File...

MASV Achieves TPN Gold Shield For Cloud Security

Setting the Gold Standard: MASV Secures Top-tier TPN Assessment for Secure Large-Scale Media File...

Undecided? Try For Free.

Start risk-free without a credit card and enjoy 20 GB on us.

Frequently Asked Questions

What is MASV?

MASV is a secure cloud file transfer service designed to quickly move heavy media files worldwide and keep up with fast-paced production schedules. Global media organizations rely on MASV to automatically deliver their large files without any restrictions, allowing them to concentrate on their next big deliverable.

Is MASV secure?

MASV is an ISO 27001 and SOC 2 certified secure file transfer solution. All MASV transfers are encrypted in flight and at rest, and users can choose to password-protect every transfer. MASV is also validated by the Trusted Partner Network, a detailed security assessment led by the Motion Picture Association.

Additionally, MASV uses Amazon Web Services, which has the best on-premises security in the world.

How much does MASV cost?

MASV offers a usage-based pricing model of $0.25 for every gigabyte downloaded, with a discount available for data purchased in advance. Charges are billed monthly to a credit card on file.

Additionally, MASV offers 10 days of complimentary storage for any file sent or received.
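As a rough illustration of the per-gigabyte pricing described above, here is a minimal sketch that estimates a charge from download volume. The function name `download_cost` is hypothetical. The rounding to whole cents is an assumption, since how MASV actually rounds and bills is not stated here. The sketch also ignores free sign-up credits and storage beyond the complimentary period.

```python
from decimal import Decimal

# Standard Pay-As-You-Go rate quoted on this page, in US dollars per gigabyte downloaded.
PAYG_RATE = Decimal("0.25")


def download_cost(gigabytes_downloaded, rate_per_gb=PAYG_RATE):
    """Estimate the charge for a given download volume.

    Assumption: the result is rounded to whole cents. The real billing
    rules may differ.
    """
    if gigabytes_downloaded < 0:
        raise ValueError("gigabytes_downloaded must be non-negative")
    total = Decimal(str(gigabytes_downloaded)) * rate_per_gb
    return total.quantize(Decimal("0.01"))


# A 120 GB delivery downloaded once at the standard rate.
print(download_cost(120))

# The same delivery that is never downloaded incurs no charge.
print(download_cost(0))
```

Passing a different `rate_per_gb`, such as a prepaid rate, lets you compare the two options for the same volume.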

What is the MASV app?

MASV offers both a browser version and a free desktop version of its transfer platform. The desktop app provides greater performance and reliability, as well as faster file transfers.

Additionally, the MASV desktop version comes with acceleration features exclusive to the app, such as Multiconnect channel bonding, priority transfers, and automated workflows.

Does MASV have an API?

Yes, the MASV API lets developers integrate MASV’s transfer capability into their custom workflows to automate file uploads, downloads, and backups to storage.

Does MASV offer a free sign-up?

Yes, any new user can sign up for MASV for free and get 20 gigabytes in data credits to use towards their transfers.

Why choose a pay-as-you-go file transfer plan?

A pay-as-you-go (PAYG) file transfer plan is an attractive option for many as it provides flexibility and cost-savings on a case-by-case basis.

Subscription models charge a monthly fee regardless of whether you use the service. These services often split their features into separate pricing tiers and impose restrictions on their cheaper plans.

This doesn’t reflect the nature of file transfer, especially for creative professionals who are used to working on a per-project basis.

With MASV’s PAYG transfer plan, users pay a flat fee of $0.25 for every gigabyte downloaded. If a file is not downloaded, the user is not charged, which is great for video teams and freelancers who work in fluctuating project environments. MASV also offers a transfer log so users can invoice individual transfers back to their clients.

Lastly, PAYG ensures users get the best possible speed and performance from MASV. If the file is not delivered, MASV does not make money.

What apps does MASV work with?

MASV integrates with a number of popular apps, from storage providers like Google Drive, Dropbox, and Amazon S3 to productivity tools like Slack and Microsoft Teams to media-specific tools like Frame.io and Adobe Premiere Pro.

MASV is designed to complement, not disrupt, your existing workflow. You can view our full list of integrations here.

How does MASV work?

MASV is simple enough for anyone to use. Sign up for an account, then drag and drop your files and folders into either the MASV browser app or the desktop app. During upload, you can write a short message to your recipient, set an expiry date for your transfer, and add a password. MASV can send files of up to 15 TB each, delivered by email or as a share link. You can learn more in this video.

MASV also has a series of tools to help make file transfer faster and easier:

- MASV Portals – create your own upload window to receive large files

- Cloud Integration – store files you receive directly into your preferred cloud storage

- Watch Folders – create upload and download automations

- Multiconnect – bond multiple internet connections to create one strong network

- File Transfer API – create custom workflows with MASV as the ingest and egress tool